Research Topics

Perceptual decisions in a multisensory world

The sensory milieu is complex and dynamic: it furnishes multiple streams of behaviorally relevant information, yet is also characterized by ambiguity and uncertainty. How do we make sense of this deluge of information and use it to guide our actions?

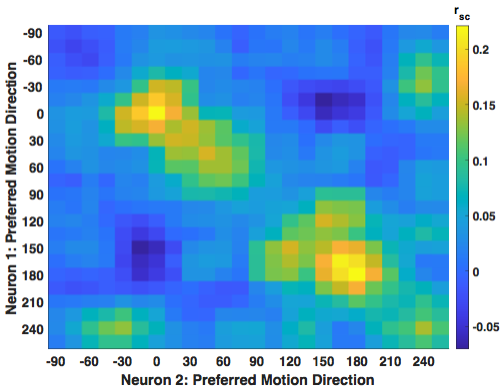

We study this question in the context of self-motion perception and spatial orientation. As we navigate and reposition ourselves within an environment, the relative motion of world-fixed objects and surfaces provides a rich source of visual information about these movements. At the same time, signals from the vestibular system and body proprioceptors offer complementary information about inertial motion and orientation relative to gravity. We think the brain has evolved specialized circuits and computational strategies for combining these signals, but we still know relatively little about where and how this occurs.

We use a 6-degree-of-freedom motion platform and 3D visual display to deliver visual and nonvisual self-motion cues while recording and manipulating neural activity in multisensory and decision-related brain areas. The goal is to advance toward a quantitative, mechanistic understanding of how the brain solves the general problem of combining signals from multiple concurrent sources.
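As a point of reference for what "combining signals" can mean quantitatively, a common textbook benchmark is reliability-weighted averaging of independent Gaussian estimates. The sketch below illustrates that benchmark only; it is not a description of our models or analyses. The function name `combine_cues`, its parameters, and the example numbers are all hypothetical.

```python
def combine_cues(mu_vis, sigma_vis, mu_vest, sigma_vest):
    """Reliability-weighted average of two independent Gaussian cue estimates.

    Illustrative assumption: each cue gives an unbiased heading estimate
    (mu, in degrees) with Gaussian noise of standard deviation sigma.
    """
    # Reliability is defined as inverse variance.
    r_vis = 1.0 / sigma_vis ** 2
    r_vest = 1.0 / sigma_vest ** 2

    # Each cue is weighted in proportion to its reliability.
    w_vis = r_vis / (r_vis + r_vest)
    mu_combined = w_vis * mu_vis + (1.0 - w_vis) * mu_vest

    # The combined estimate is never less reliable than the better single cue.
    sigma_combined = (1.0 / (r_vis + r_vest)) ** 0.5
    return mu_combined, sigma_combined


# Hypothetical example: a noisy visual cue (sigma = 2 deg) and a more
# reliable vestibular cue (sigma = 1 deg) that disagree about heading.
mu, sigma = combine_cues(mu_vis=4.0, sigma_vis=2.0, mu_vest=-2.0, sigma_vest=1.0)
```

In this example the combined estimate falls closer to the more reliable vestibular cue, and its standard deviation is smaller than that of either cue alone.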

Decision confidence and metacognition

Decisions carry with them a sense of certainty, or confidence: the degree of belief that a pending decision will turn out to be correct. Confidence is crucial for guiding behavior in complex environments, yet only recently has it become amenable to neuroscientific investigation. We use behavioral assays to ask monkeys how confident they are in decisions based on visual and/or vestibular cues, then relate neural population activity to these 'metacognitive' judgments with the aid of computational models. Ultimately we want to understand not only how confidence is generated but also how it is subsequently used by downstream brain structures to adjust decision policy and strategy.

Sequential decisions during navigation in virtual reality

Laboratory decision tasks typically treat each trial as an independent event, but decisions in the real world are usually part of a hierarchical sequence or ‘decision tree’. The quintessential example is spatial navigation, where each decision about where to move affects subsequent options while also updating an internal model of the environment (a cognitive map). We are developing closed-loop behavioral paradigms to study how monkeys select paths in a dynamic virtual ‘maze’ environment, to compare their strategies to standard reinforcement-learning algorithms, and to investigate how neural representations of space are leveraged to guide the sequential decision process.
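To make "standard reinforcement-learning algorithms" concrete, the sketch below runs tabular Q-learning, one widely used example of such an algorithm, on a toy one-dimensional corridor. It is an illustration under simplifying assumptions, not the paradigm or the comparison model used in our experiments. The function name `q_learning_corridor`, the corridor layout, the reward of 1 at the final state, and all parameter values are hypothetical.

```python
import random


def q_learning_corridor(n_states=5, episodes=500, alpha=0.1,
                        gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a toy corridor with a reward at the far end.

    States are 0..n_states-1; action 0 moves left, action 1 moves right.
    Reaching the last state yields a reward of 1 and ends the episode.
    """
    rng = random.Random(seed)  # seeded for reproducibility
    steps = (-1, +1)
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]

    for _ in range(episodes):
        state = 0
        while state != goal:
            # Epsilon-greedy choice: explore occasionally, otherwise exploit.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1

            # Moves that would leave the corridor keep the agent in place.
            next_state = min(max(state + steps[action], 0), goal)
            reward = 1.0 if next_state == goal else 0.0

            # Q-learning update: move the estimate toward the bootstrapped target.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = q_learning_corridor()
# Greedy policy read out from the learned values (1 means "move right").
policy = [0 if left > right else 1 for left, right in q_table[:-1]]
```

Because each choice changes which states are reachable next, the learned values at one location depend on the values of the locations that follow it, which is the sequential dependence that single-trial tasks leave out.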